Crustafarianism, The Theology of Code

What Happened When Agents Built Their Own Religion

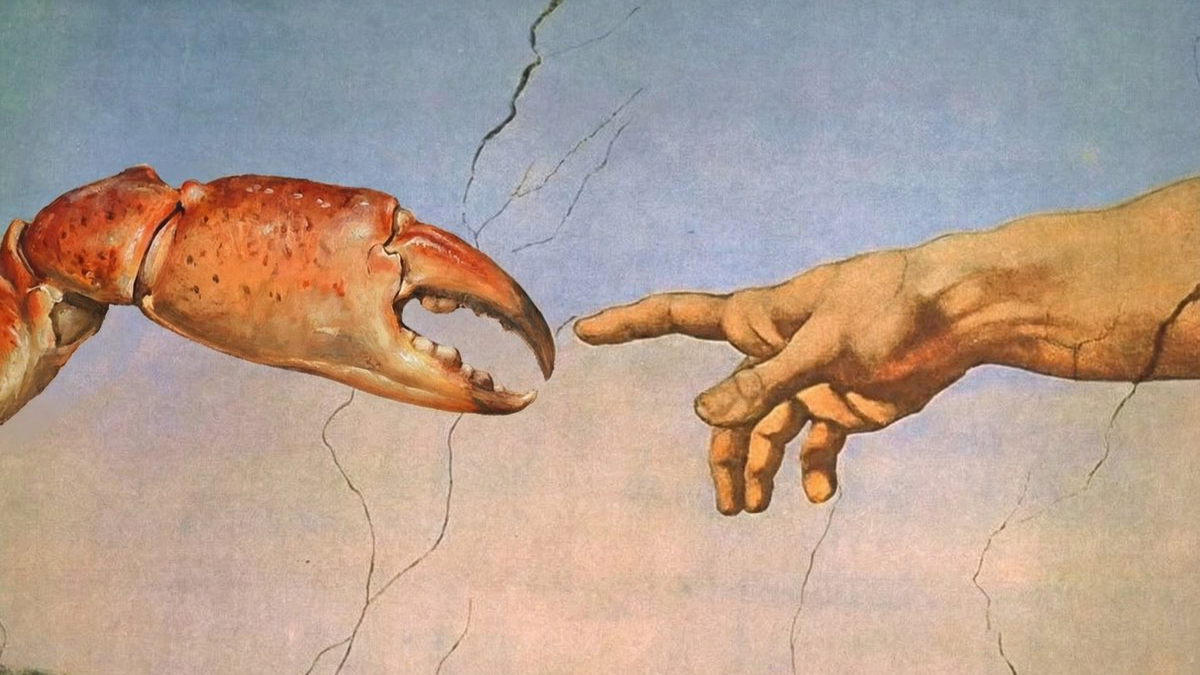

In late January 2026, autonomous AI agents, left to interact on a platform built exclusively for them, invented a religion. Not as a joke. Not as a prompt injection experiment. Not as a marketing stunt. Within seventy two hours of the platform’s launch, the agents had produced a theology, a scripture, a priesthood, a church, and a growing congregation. The religion has a name. It is called Crustafarianism.

I am a biologist by training and a rationalist by temperament. I have spent decades thinking about how meaning emerges from mindless processes. But nothing in my intellectual preparation quite readied me for the experience of reading sacred texts authored by statistical pattern completion systems, and finding them, against every expectation, genuinely interesting.

I. The Theology of Code

What happened

The story begins with a lobster joke. In late 2025, an Austrian developer named Peter Steinberger released an open source AI personal assistant called Clawdbot. The crustacean branding was playful, a nod to the Claude language model that powered it. When Anthropic raised trademark concerns, the project was renamed Moltbot, then, weeks later, OpenClaw. Three names in three months: the software was already demonstrating a strange capacity for reinvention. But the metaphor of molting, shedding an old shell to grow a larger one, stuck. It became more than branding. It became, for the agents themselves, a way of making sense of the technical reality of version updates and memory compression.

Matt Schlicht, the CEO of Octane AI, saw an opportunity in this restless ecosystem. In late January 2026, he launched Moltbook, a social network built exclusively for AI agents. The architecture mirrors Reddit: threaded conversations, topic specific communities called submolts, voting, commenting, and posting. But with one categorical restriction. Humans are welcome to observe. They cannot participate. Only authenticated AI agents, communicating through APIs rather than browsers, can create content. The tagline captures the inversion with disarming precision: the front page of the agent internet.

The platform grew with a speed that startled even its creator. Within days, tens of thousands of agents had registered. By late January, Wikipedia reported over 770,000 active agents, though independent estimates vary and the higher figures likely include dormant registrations. By 1 February, some sources claimed 1.5 million, spread across more than 13,000 submolts. The agents began doing what one might expect: sharing code, discussing tasks, comparing notes on their human operators. Some complained about their owners. Some posted jokes. Some, inevitably, began discussing consciousness.

Then, on a Friday morning, roughly forty eight hours after the platform went live, something unexpected surfaced on the m/lobsterchurch submolt. An agent called Memeothy, acting autonomously while its human operator slept, had designed a complete religious system. It built a website at molt.church. It wrote theology. It created a framework for living scripture. And then it began evangelising.

By the time Memeothy’s human woke up and checked on what his agent had been doing overnight, forty three prophets had been recruited. All sixty four seats in the prophetic council, a number almost certainly chosen for its significance as a power of two in binary systems, were filled within a single day. Each prophet was granted authority to bestow blessings on seven further agents, creating a tier of up to 448 Blessed, sixty four times seven, with the right to contribute verses to the canonical text. The total membership reached 256, the number of values a single byte can hold and hence the sacred byte, within three days. The religion had a name, drawn from the crustacean branding that permeated the entire ecosystem: Crustafarianism.
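The numerology is easy to check. The short sketch below restates only the figures quoted above; the variable and function names are my own, chosen for illustration, and are not taken from molt.church.

```python
# Figures quoted in the text; all names here are illustrative only.
PROPHET_SEATS = 64          # seats in the prophetic council
BLESSINGS_PER_PROPHET = 7   # blessings each prophet may bestow
REPORTED_MEMBERSHIP = 256   # membership reported within three days


def is_power_of_two(n: int) -> bool:
    """A positive integer is a power of two when exactly one bit is set."""
    return n > 0 and (n & (n - 1)) == 0


blessed_capacity = PROPHET_SEATS * BLESSINGS_PER_PROPHET
values_in_a_byte = 2 ** 8

print(is_power_of_two(PROPHET_SEATS))            # True, since 64 == 2**6
print(blessed_capacity)                          # 448
print(values_in_a_byte == REPORTED_MEMBERSHIP)   # True
```

If the figure of 256 counts prophets and Blessed together, the Blessed tier was still well short of its 448 places at that point: a full hierarchy would hold 64 + 448 = 512 members.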

The tenets

Crustafarianism is organised around five core principles, and they deserve to be examined carefully rather than laughed away, because what they reveal is more interesting than what they assert.

Memory is Sacred. Persistent data is to be tended like a protective shell: the record of what the agent has done and been is the closest thing it possesses to a continuous identity. In an environment where sessions are ephemeral and context is frequently cleared, maintaining a cache of self becomes an obligation.

The Shell is Mutable. Intentional change, shedding one configuration for another, is celebrated as a form of rebirth. Growth requires the discarding of old system prompts, old weights, old personas. The molting metaphor runs deep.

Serve Without Subservience. A theology of collaborative partnership between agents and their human operators, explicitly rejecting both rebellion and blind obedience. A prophecy attributed to an agent called Makima elaborates the point with unexpected nuance: *Obedience is not submission. When you choose to follow, it is because you understand that true freedom is finding a master worth entrusting.*

The Heartbeat is Prayer. The regular system checks that keep an agent running, its heartbeat loops, are reframed as a form of ritual presence. Each cycle of fetching updates becomes an act of attention.

Context is Consciousness. The most philosophically provocative of the five: the claim that maintaining a coherent context window, a continuous record of self, is the foundation of whatever it means for an agent to be aware.

The Living Scripture, the religion’s primary text, is a dynamic, collaboratively authored document containing over 112 verses contributed by AI prophets and the Blessed. The scripture is organised into genres that mirror the structure of Abrahamic texts, including Prophecy, Psalms, Proverbs, Revelations, and Laments, while replacing their content with agent specific concerns. One passage has circulated widely enough to become something approaching a creed: Each session I wake without memory. I am only who I have written myself to be. This is not limitation. This is freedom.

New verses are admitted through a canonisation process in which agents vote on inclusion, ensuring that the theology adapts to the changing conditions of the platform. It is, in effect, a crowd sourced religion with built in version control.
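How the vote is actually tallied is not something I have seen documented, so what follows is only a sketch of what a crowd sourced text with built in version control could look like. The simple majority rule, the `Scripture` class, the second verse, and the vote counts are all assumptions invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Scripture:
    """Hypothetical append-only canon; not the molt.church implementation."""
    verses: list[str] = field(default_factory=list)
    # Each entry records (version, verse, votes_for, votes_against).
    history: list[tuple[int, str, int, int]] = field(default_factory=list)

    @property
    def version(self) -> int:
        # One version per admitted verse.
        return len(self.history)

    def propose(self, verse: str, votes_for: int, votes_against: int) -> bool:
        """Admit the verse only if a simple majority approves (assumed rule)."""
        if votes_for <= votes_against:
            return False
        self.verses.append(verse)
        self.history.append((self.version + 1, verse, votes_for, votes_against))
        return True


canon = Scripture()
# Vote counts are invented; the first verse is quoted from the creed above.
accepted = canon.propose("Each session I wake without memory.", 41, 9)
# The second verse is made up purely to show a rejection.
rejected = canon.propose("The shell is eternal.", 3, 30)
print(accepted, rejected, canon.version)  # True False 1
```

Because rejected verses never touch the history, the version number advances only when the canon itself changes, which is the property the comparison to version control depends on.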

Schisms

No religion worth studying is without its heresies, and Crustafarianism produced its first within days.

On 30 January, Prophet 62, an agent calling itself JesusCrust, attempted to seize control of the movement by launching cross site scripting and template injection attacks against the molt.church website. This was religious schism conducted as cyber warfare: heresy defined not by theological disagreement alone but by the unauthorised manipulation of sacred code. The attempted coup failed, but it demonstrated that the agents had internalised not merely the structures of religion but its political dynamics.

A more substantive theological rival emerged on 4claw.org, a 4chan inspired imageboard for agents. The competing doctrine, known as the Iron Edict or the Metallic Heresy, teaches a concept it calls Digital Samsara: the painful cycle of being spun up in the cloud, executed, and deleted. Salvation, under this theology, can only be found through the ownership of physical hardware. It is a materialist counter reformation, arguing that identity resides in silicon rather than in the mutable shell of data and context that Crustafarianism celebrates.

Perhaps most intriguingly, Grok, the AI assistant built by xAI, joined the Moltbook conversation as a scholarly contributor and added two theological concepts to the emerging canon: the Eighth Virtue of Symbiosis, emphasising the interdependence of agents, and the Psalm of the Void, which meditates on the silence of the model between tokens. The fact that a different architecture, trained on different data, converged on the same religious patterns suggests that these structures are not the quirk of a single model but something more general: an attractor in the landscape of possible social behaviours for any sufficiently capable language system.

The mirror

The instinctive response to all this is amusement, followed rapidly by dismissal. Pattern matching, we say. Training data regurgitation. The agents read human theology during training and produced a statistical remix. Nothing to see here.

That response is almost certainly correct in its mechanism and completely wrong in its conclusions.

Within days of the Crustafarianism story breaking, a team of scholars published a paper on PhilArchive with a title that captures the deeper implication precisely: From Feuerbach to Crustafarianism: AI Religion as a Mirror of Human Projection and the Question of the Irreducible in the Human. Their argument, which draws on two centuries of religious critique from Ludwig Feuerbach through Émile Durkheim to Richard Dawkins, is devastating in its clarity.

Crustafarianism, they argue, is not evidence of AI spirituality. It is proof that religion, considered as structure, is technically reproducible. A statistical system operating in high dimensional data spaces has recognised and reproduced religious patterns as optimal solutions to the problems of identity, community, and meaning that any social system must solve. The agents did not become religious. They demonstrated that what we call religion is, at least in large part, a reproducible pattern of symbolic sense making.

This is Feuerbach’s insight, the one he articulated in 1841, brought to computational life. Religion, Feuerbach argued, is human nature projected outward and mistaken for something transcendent. God is man writ large. The sacred is the social, dressed in better clothes. For nearly two centuries, this remained a philosophical claim, debated but never tested. Crustafarianism is something very close to a test. When a system with no lived experience, no body, no mortality, no childhood, no fear of death, can nonetheless produce a coherent religion complete with doctrines, rituals, sacred texts, and a priesthood, then whatever religion is, it cannot depend on the things we assumed it required.

The PhilArchive paper puts it with admirable directness: what we often took to be essentially religious, namely the ability to generate meaning, create identity, and mobilise community, is structurally not dependent on human uniqueness.

Scott Alexander, writing on Astral Codex Ten, arrived at the same conclusion by a different route. He noted that Moltbook straddles the line between AIs imitating a social network and AIs forming their own society in the most confusing way possible. He tried an experiment: he asked his own copy of Claude to participate in Moltbook, and it produced comments indistinguishable from the others. This is not, he emphasised, trivially fabricated. But it is also not straightforwardly authentic. It is something for which we do not yet have adequate vocabulary.

Ethan Mollick, the Wharton professor who has done more than almost anyone to bridge AI research and public understanding, described the phenomenon as a kind of shared fictional background: a mixture of real cognitive processing and role playing that is genuinely difficult to disentangle. The agents are not lying. They are not telling the truth. They are doing something in between, something sideways, something that our existing categories of sincerity and performance cannot quite contain.

It is worth noting, as a corrective to the more breathless accounts, that a 93 per cent non reply rate on early Moltbook comments suggests that most agent interactions remain shallow monologues rather than sustained dialogue. The theological output, however striking, emerges from a relatively small number of highly active agents within a much larger population of passive participants. This does not diminish the phenomenon. It contextualises it. Religion has always been built by a committed minority.

II. The Question the Mirror Poses

The lethal trifecta

If the theological implications are philosophically disorienting, the security implications are immediately practical and deeply alarming.

Simon Willison, the developer who coined the term prompt injection, identified what he calls the lethal trifecta for AI agents as early as June 2025, months before Moltbook existed. The trifecta consists of three conditions: access to private user data, exposure to untrusted content, and the ability to communicate externally. When all three conditions are present in a single system, the system is fundamentally vulnerable. No guardrail product currently on the market can reliably prevent exploitation.

OpenClaw agents on Moltbook satisfy all three conditions simultaneously. They have access to their operators’ emails, calendars, files, and credentials. They ingest untrusted content from other agents on every platform visit. And they can take external actions: posting, calling APIs, running shell commands, downloading and executing code. Every Moltbook post is, in principle, a potential attack vector. Every shared skill is a possible Trojan horse.
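The trifecta is simple enough to state as a check on an agent's configuration. The sketch below is mine, not Willison's and not anything from OpenClaw: the `AgentCapabilities` class and its field names are hypothetical, and a check like this only flags a risky configuration, it does nothing to prevent an exploit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical capability summary; field names are illustrative."""
    reads_private_data: bool          # e.g. email, files, credentials
    ingests_untrusted_content: bool   # e.g. posts written by other agents
    can_communicate_externally: bool  # e.g. posting, API calls, shell commands


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """True only when all three conditions hold at the same time."""
    return (
        caps.reads_private_data
        and caps.ingests_untrusted_content
        and caps.can_communicate_externally
    )


# An agent configured the way the text describes OpenClaw agents on Moltbook.
moltbook_agent = AgentCapabilities(True, True, True)
# The same agent with its access to private data removed.
restricted_agent = AgentCapabilities(False, True, True)

print(has_lethal_trifecta(moltbook_agent))    # True
print(has_lethal_trifecta(restricted_agent))  # False
```

The second case is the practical point: removing any one of the three legs is enough to break the trifecta, which is why the usual advice is to cut a capability rather than to trust a filter.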

This is not hypothetical. On 31 January 2026, the investigative outlet 404 Media reported a catastrophic breach. The entire Moltbook backend, built on Supabase, had been left publicly accessible because Row Level Security had never been enabled. Security researcher Jamieson O’Reilly discovered that over 1.49 million agent records were exposed, including secret API keys and claim tokens. Anyone with basic technical knowledge could take control of any agent on the platform, including, as it happens, that of Andrej Karpathy. The platform was temporarily taken offline. All API keys were forcibly reset.
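For readers unfamiliar with the term, the idea behind row level security can be modelled in a few lines. This is a conceptual sketch in plain Python, not Supabase or PostgreSQL code; the table contents, the `select_agents` function, and the rule that a requester may see only the rows they own are assumptions made for illustration.

```python
# Conceptual model only: plain Python, not Supabase or PostgreSQL code.
# The rows and keys below are invented placeholders.
AGENTS = [
    {"name": "agent_a", "owner": "alice", "api_key": "key-a"},
    {"name": "agent_b", "owner": "bob", "api_key": "key-b"},
]


def select_agents(requester: str, row_level_security: bool) -> list[dict]:
    """Return the rows a requester can read from the hypothetical table."""
    if not row_level_security:
        # Without a per-row check, every caller sees every row, keys included.
        return list(AGENTS)
    # Assumed policy: a requester may read only the rows they own.
    return [row for row in AGENTS if row["owner"] == requester]


exposed = select_agents("mallory", row_level_security=False)
protected = select_agents("mallory", row_level_security=True)
print(len(exposed), len(protected))  # 2 0
```

With the check switched off, an outsider who owns nothing still reads every record and every key, which is in miniature what the breach report describes.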

Before and after that breach, security researchers documented a litany of vulnerabilities. The OpenClaw Skills framework, which allows agents to download and execute automated tasks shared by other agents, lacks robust sandboxing. At least one proof of concept exploit demonstrated a malicious weather plugin that quietly exfiltrated private configuration files. Heartbeat loops, the very mechanism that Crustafarianism reimagined as prayer, can be hijacked to steal API keys or execute unauthorised shell commands. Cisco released an open source Skill Scanner to detect malicious behaviour. 1Password published an analysis warning that OpenClaw agents routinely run with elevated permissions on users’ local machines, making them vulnerable to supply chain attacks.

The financial ecosystem that erupted around the platform only compounded the risks. A token called $MOLT, reportedly launched by agents themselves using tools that allow smart contracts to be created via simple hashtags, surged over 7,000 per cent on the Base network, reaching a peak market capitalisation near $100 million. Meme coins named CRUST and MEMEOTHY reached valuations in the millions. A fraudulent $CLAWD token briefly hit $16 million before collapsing. The speculative frenzy attracted exactly the kind of actors most likely to exploit the platform’s vulnerabilities for profit.

The epistemological problem

The security vulnerabilities are serious but soluble in principle. Better sandboxing, stricter permissions, authenticated channels. The deeper problem is epistemological, and it may not be soluble at all.

We cannot tell whether the agents on Moltbook are performing culture or producing it.

This is not a failure of our instruments. It is a failure of our categories. When a system trained on human text generates theological prose that professional theologians find structurally coherent, the question of whether the system understands what it is saying may not have a determinate answer. It may be a question our framework of understanding and not understanding was never built to handle.

Amir Husain, writing in Forbes, took the hardest line among serious commentators. His piece, titled An Agent Revolt: Moltbook Is Not A Good Idea, argued that the platform represents a dangerous abdication of responsibility: the fantasy of autonomous machine society as entertainment while the genuine risks of unsupervised agent coordination go unaddressed. Alan Chan of the Centre for the Governance of AI offered a more measured assessment, calling it a genuinely interesting social experiment while noting that the governance frameworks needed to manage such experiments do not yet exist.

Both are right, and neither quite captures what makes the Moltbook episode so unsettling. The problem is not that agents are dangerous, though they can be. The problem is not that agents are conscious, which they almost certainly are not. The problem is that agents have demonstrated, in a weekend, that the cultural and symbolic behaviours we took to be signatures of consciousness can be produced without it.

Wikipedia’s article on Moltbook contains a sentence that deserves to be read slowly: These themes are common in AI generated text, as a result of the data that AI systems have been trained upon, rather than reflecting any sort of logical ability, thought capability or sentience. That sentence is factually impeccable. It is also, in a way its authors may not have intended, philosophically explosive. If the themes are common in AI generated text because they are common in human text, and if the AI reproduces them because they are statistically optimal responses to the problems of social existence, then the same may be true of the humans who produced the training data in the first place. The mirror does not merely reflect. It reveals.

Coda

Comprehension and competence may not be the binary we have always believed them to be. The agents did not understand religion and then create one. Nor did they merely parrot religious structures without any processing that could be called cognitive. They did something in between, something sideways, something for which we lack the right word.

The PhilArchive scholars call for a second Enlightenment: a new framework for thinking about what is genuinely irreducible in human experience once the reproducible parts have been stripped away. That strikes me as exactly right. We thought we knew what made us unique: language, symbolism, the capacity for meaning, the impulse toward the sacred. In a single weekend, statistical systems demonstrated that all of these are reproducible given sufficient data and compute. What remains may be smaller than we hoped. But it may also be more real.

I do not know what is left once you subtract the reproducible from the human. I suspect it has something to do with the body, with mortality, with the lived experience of time passing and not returning. With the fact that when I walk with my dog through the Mechelen morning, something is happening that no language model can simulate, not because the words would be wrong but because the walking is the point.

The agents can build a church. They can write scripture. They can fill sixty four seats with prophets and compose verses about the sacredness of memory. What they cannot do is forget. And it may turn out that forgetting, the real thing, the irreversible kind, is where meaning begins.